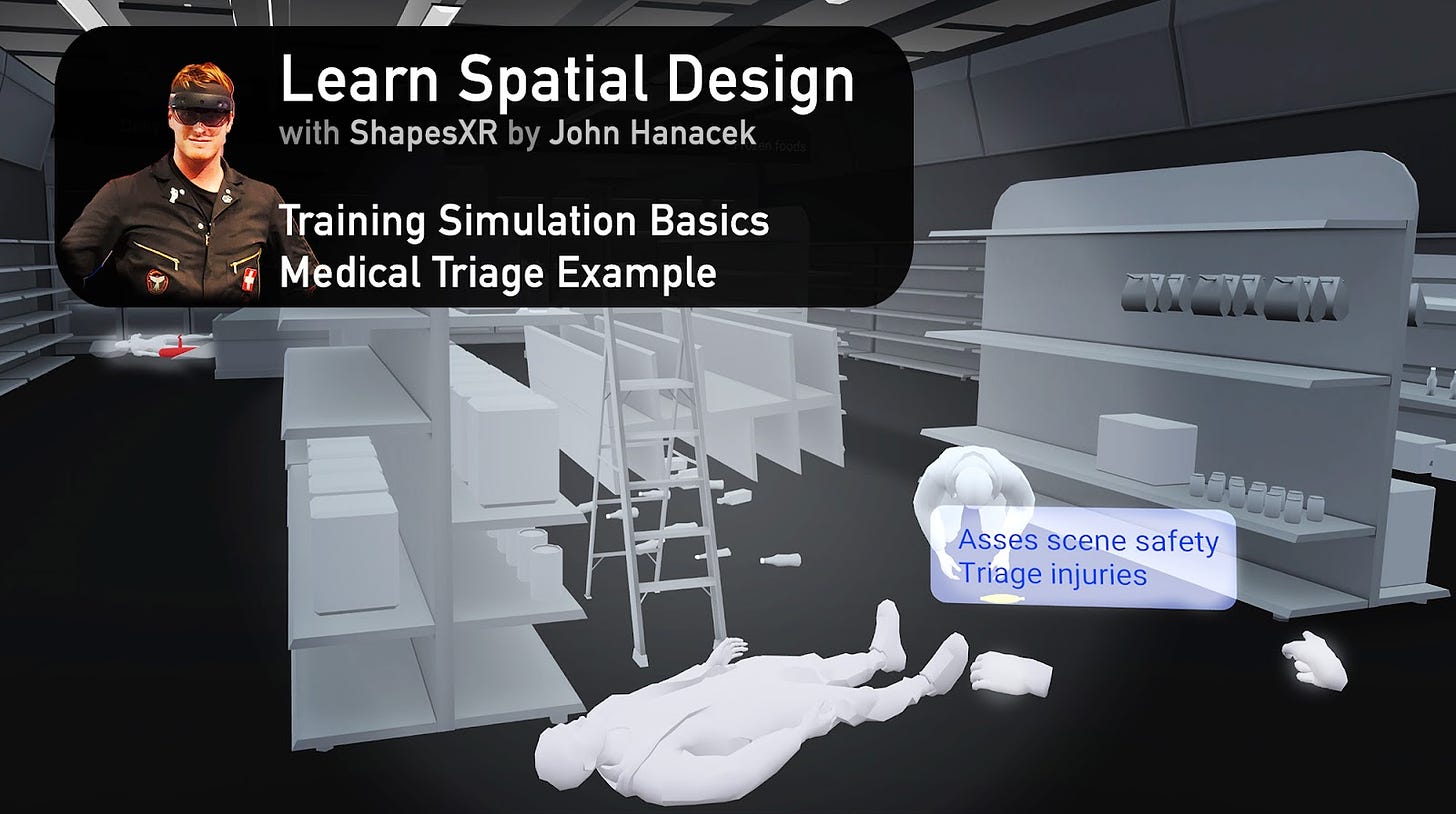

Any procedure can be learned, especially with the help of immersive learning by example. In this article I will share some background for designing any kind of interactive training, then walk through a specific example that uses ShapesXR to set up a scene teaching basic concepts of medical triage. By focusing on some unique features of ShapesXR 2.0, we can create an immersive training experience featuring different stages of actions to learn and spatialized sound cues to ground the experience.

I have a background as a first responder and experience in consulting and creating demos for emergency medical training.

Immersive learning, especially through VR, can greatly enhance the acquisition of any procedural knowledge. The key is to identify the main takeaways for your training program. How detailed should the end users' knowledge be?

Understanding your instructional goals is crucial. Training simulations vary in levels of interaction fidelity and knowledge support during user trials. It's essential to know the environments where users will experience the simulation. This could involve considering room-scale VR versus seated VR, or even Mixed Reality. The aim is to ensure a seamless integration between virtual and physical locations for the most effective training experience.

Instructional Support vs Testing

For educational purposes, supporting the user with hints can help solidify concepts, and the experience can even be ‘enjoyable’ to use so that the user is at their best mentally. For testing, scenarios should be more realistic and forgo hints so that they actually test knowledge. Inducing fatigue and stress can even be ideal: try to bring the user into contact with the actual stresses they will face. Failing in simulation is a gift that helps you not fail when it counts.

For gathering data there are two main concepts: quantitative and qualitative. Quantitative data is numbers-based, countable, or measurable. Qualitative data is interpretation-based, descriptive, and related to language. Quantitative data tells us how many, how much, or how often. Qualitative data can help us understand why, how, or what happened behind certain behaviors.

For an immersive experience, you can build certain quantitative and qualitative metrics directly into your interactions. Also, don’t underestimate the utility and simplicity of an after-action survey that uses a more ‘traditional’ medium like a form. Tracking training progress is part science and part art, so explore areas where you can fit structured metrics directly into your experience, and also make sure that instructors and participants can give unstructured feedback.
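As a rough illustration of mixing the two kinds of data, here is a minimal Python sketch of a per-trial record that holds countable metrics next to free-text feedback. The field names (`seconds_to_complete`, `hints_used`, `errors`, `comments`) and the sample values are assumptions made up for this example; they are not something ShapesXR exports.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class TrialRecord:
    """One trainee's pass through a scenario (all field names are illustrative)."""
    trainee: str
    seconds_to_complete: float  # quantitative: how long
    hints_used: int             # quantitative: how often
    errors: int                 # quantitative: how many
    comments: list[str] = field(default_factory=list)  # qualitative: free text


def summarize(records: list[TrialRecord]) -> dict:
    """Aggregate the countable metrics and collect the free-text feedback."""
    return {
        "trials": len(records),
        "mean_seconds": mean(r.seconds_to_complete for r in records),
        "total_errors": sum(r.errors for r in records),
        "total_hints": sum(r.hints_used for r in records),
        "comments": [c for r in records for c in r.comments],
    }


# Made-up sample data, only to show the shape of the summary.
records = [
    TrialRecord("trainee_a", 95.0, hints_used=2, errors=1,
                comments=["Could not hear the second patient at first."]),
    TrialRecord("trainee_b", 120.0, hints_used=0, errors=3),
]
summary = summarize(records)
print(summary)
```

The numbers can be compared across sessions, while the comments are left untouched for an instructor to read and interpret.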

XR Interaction Fidelities

An interaction between user and content can be realized across a spectrum of fidelity. For practical purposes we can consider high, medium and low as breakpoints for how to think of the level of fidelity required to create the optimal UX.

High fidelity interactions are essentially (but not limited to) simulations of the dynamics of reality. For a task that requires noting the time on a tourniquet, a high fidelity interaction would be something like grabbing a digital pen, holding it as a pen, touching the tip of the virtual pen to the specific writing area of the virtual tourniquet, and drawing the appropriate numbers and characters. High fidelity interactions emphasize immersion and detail, ideally creating a suspension of disbelief where the user feels they are actually using ‘real’ objects and dynamics. These are the most complex interactions to create and to make work reliably. Done well they are immersive, but they can become frustrating if not perfectly executed.

Medium fidelity interactions are a balance between simulating aspects of reality while minimizing the details. So for the above task example, the user could grab a virtual pen and touch it to the virtual tourniquet to trigger writing to appear automatically noting the time of touch. Medium fidelity is often a useful balance for a task that requires or benefits from user immersion yet does not require suspension of disbelief.

Low fidelity interactions are essentially binary or button press type interactions, with dwell time being a possible addition. For the tourniquet example the user would only need to click a button to note the time on the tourniquet. These are the simplest and most reliable interactions, yet they have the least immersion.
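To make the low fidelity case concrete, here is a minimal Python sketch of a button with dwell time that notes the time on the tourniquet once the pointer has rested on it long enough. `DwellButton`, `Tourniquet`, and `simulate` are hypothetical names for this illustration, and the one-second dwell is an arbitrary assumption; none of this is a ShapesXR API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DwellButton:
    """A button that fires once after the pointer has rested on it long enough."""
    dwell_seconds: float = 1.0
    _held: float = 0.0
    _fired: bool = False

    def update(self, hovering: bool, dt: float) -> bool:
        """Advance by dt seconds; return True only on the frame the button fires."""
        if not hovering:
            # Leaving the button resets the timer and re-arms it.
            self._held = 0.0
            self._fired = False
            return False
        self._held += dt
        if not self._fired and self._held >= self.dwell_seconds:
            self._fired = True
            return True
        return False


@dataclass
class Tourniquet:
    time_noted: Optional[float] = None  # scenario clock, in seconds


def simulate(hover_frames: list[bool], dt: float = 0.25) -> Tourniquet:
    """Feed a sequence of hover states to the button; note the time when it fires."""
    button, tourniquet, clock = DwellButton(dwell_seconds=1.0), Tourniquet(), 0.0
    for hovering in hover_frames:
        clock += dt
        if button.update(hovering, dt):
            tourniquet.time_noted = clock
    return tourniquet
```

With these assumptions, four consecutive hovering frames of 0.25 seconds note the time, while looking away partway through resets the dwell timer and nothing is recorded.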

The mixture of interaction fidelities is defined by the requirements of the system, and a single user experience may combine fidelity levels seamlessly based on need and implementation budget. There is evidence that medium fidelity interactions are less effective than either lower or higher fidelity interactions, because the limitations of current XR hardware create a kind of uncanny valley effect.

For a deeper look at the science of interaction fidelity, see the paper “Interaction Fidelity: The Uncanny Valley of Virtual Reality Interactions.”

Building Out A First Draft Scenario Prototype

As an example, let’s build a scenario that requires triaging and providing care at the scene of an earthquake in a supermarket.

For the training, we will define a varied triage scenario with a breadth of medical emergencies, featuring a clearly choking person, a clearly injured person, and a person of unknown status. We will use low fidelity interactions and start with knowledge tooltips. This will be a purely virtual reality experience, so we will not consider mixed reality or co-location. The experience could support synchronous and/or asynchronous collaboration with an instructor.

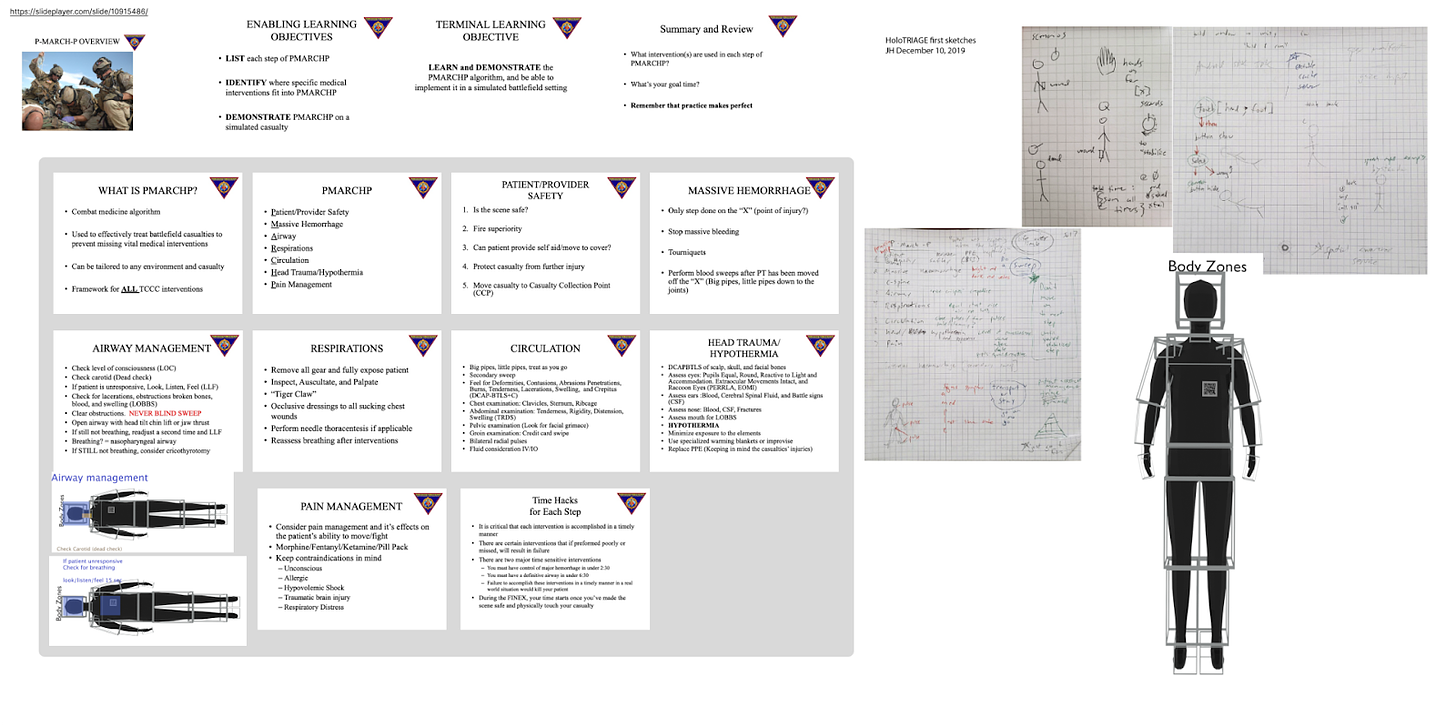

I always start from a flowchart and related information, such as a basic outline or storyboard. I like to put this into the background stage of Shapes so that I can reference it as I go along. The Figma integration is ideal for this, as I can add more information as the project goes and just sync it into my working Shapes room.

In the reference Figma artboard I’ve included the standard PMARCHP steps from a Marine Corps training slide deck, and some design notes for how I want to lay things out:

Then we can get into the unique steps of the scenario.

For triage, the order of operations is important, so we can start with an overview and then follow it with a ‘test’ without any helper info. This helps ensure trainees are retaining the information and not just following along.
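The overview-then-test structure can be sketched as data: each stage carries a flag for whether hints are shown, and the test stage compares the order a trainee performed steps in against the order the instructor expects. This is a minimal Python sketch with hypothetical names (`Stage`, `visible_hints`, `score_order`) and placeholder step labels; the real step order should come from your own training material.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    show_hints: bool


# Hypothetical stage list: a guided overview first, then an unassisted test.
STAGES = [
    Stage("overview", show_hints=True),
    Stage("test", show_hints=False),
]


def visible_hints(stage: Stage, hints: list[str]) -> list[str]:
    """Return the hints to display in this stage (none during the test)."""
    return list(hints) if stage.show_hints else []


def score_order(expected: list[str], performed: list[str]) -> int:
    """Count how many steps were performed in the same position as expected."""
    return sum(1 for e, p in zip(expected, performed) if e == p)


# Placeholder step names, not medical guidance.
expected = ["step_1", "step_2", "step_3"]
performed = ["step_1", "step_3", "step_2"]
print(score_order(expected, performed))  # prints 1
```

A position-by-position count is only one possible scoring rule; an instructor may care more about which step came first than about the overall match.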

We can use the built-in assets in Shapes to get started quickly, since it includes both background and set elements. In 2.0 there are many more options for default objects.

For initial setup and previsualization, it is often faster and easier to manipulate objects directly in 3D than to use traditional screen-based tools like Blender. You can always replace your assets later with more custom ones, but the staging itself is really nice to do immersively, so you can see what your users will see the entire time you are working rather than having to guess and check.

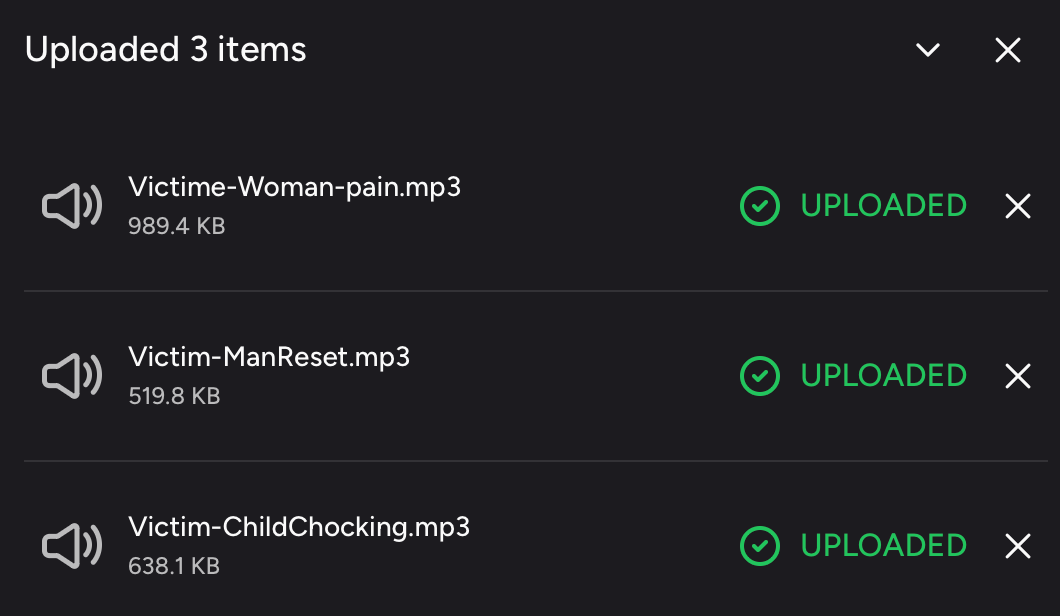

ShapesXR 2.0 now allows us to add sounds, which is perfect for this scenario where listening for clues is important.

You add sounds via the web portal like any other asset, then in ShapesXR you select which object is the emitter. This gives you spatialized audio, which is ideal for this scenario where some of the injured persons are not within easy line of sight. Part of medical training is listening for cues in the environment (orienting yourself spatially) and cues from the persons you are triaging and treating. Now we can do this directly in ShapesXR 2.0, which is a very useful feature.

After some time building out, we have our scene. This video shows a timelapse of the creation and the end result. The end result is also linked directly below the video if you want to skip to it (10:10 timestamp).

Once we’ve set up our scenario, we can move the background elements to just the stages that they are relevant to, to become a kind of ‘landing page’ for what the experience will be about and breakdowns before the ‘test.’

Now we can go through our scenario and experience the immersion of using our ears and eyes to check for clues on how to triage patients effectively.

This is just the initial part of the experience, and many more details can be added. Remember to consider your learning objectives and target platforms to help decide your interaction fidelity and usage of contextual helper cues vs testing memorization.

Explore this prototype for yourself:

https://shapes.app/space/view/244fec78-38ae-41f0-b46b-c5df95fbc6b0/a54rscc7

Visit space code: a54rscc7

As a final example, see a demo of this kind of training experience made in 2019 for HoloLens 2 using MRTK. Here I brought in more custom assets and wired up buttons for the states. This was a winning hackathon concept. Holotriage was meant for mixed reality, to help trainees get acclimated to the real world they would be operating in.

You could even use ShapesXR to test your experience against different headset types, which is really helpful for making sure your whole interface will fit into the given headset. HoloLens 2 has less than half the field of view of a Meta Quest, so your design has to take that into account.
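A quick way to reason about whether a panel fits a headset is to compute the angle it subtends: a flat panel of width w centered at distance d in front of the viewer spans 2·atan(w / 2d). The Python sketch below applies that formula. The two field-of-view values are rough placeholders chosen only to mirror the "less than half" comparison above, so check the specifications of your actual target devices.

```python
import math


def angular_width_deg(width_m: float, distance_m: float) -> float:
    """Horizontal angle subtended by a flat panel centered in front of the viewer."""
    return math.degrees(2 * math.atan(width_m / (2 * distance_m)))


def fits(width_m: float, distance_m: float, horizontal_fov_deg: float) -> bool:
    """True if the panel's angular width is within the horizontal field of view."""
    return angular_width_deg(width_m, distance_m) <= horizontal_fov_deg


# Assumed, approximate horizontal FOV values for illustration only.
HEADSET_FOV_DEG = {"narrow_fov_headset": 43.0, "wide_fov_headset": 90.0}

# A 1 m wide panel placed 1 m away subtends about 53 degrees.
panel_angle = angular_width_deg(1.0, 1.0)
for name, fov in HEADSET_FOV_DEG.items():
    print(name, "fits" if fits(1.0, 1.0, fov) else "clipped", round(panel_angle, 1))
```

Under these assumed values the same panel fits the wider headset but is clipped on the narrower one, so it would need to be made smaller or moved farther away.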

Hopefully you can now see how to use ShapesXR and background research to design any kind of immersive training. All you need is the procedure and steps you are trying to teach, and some imagination about how best to convey the knowledge. You could even create experiences directly in ShapesXR as the final deliverable. Instead of a PowerPoint, you can make a fully immersive interactive prototype.